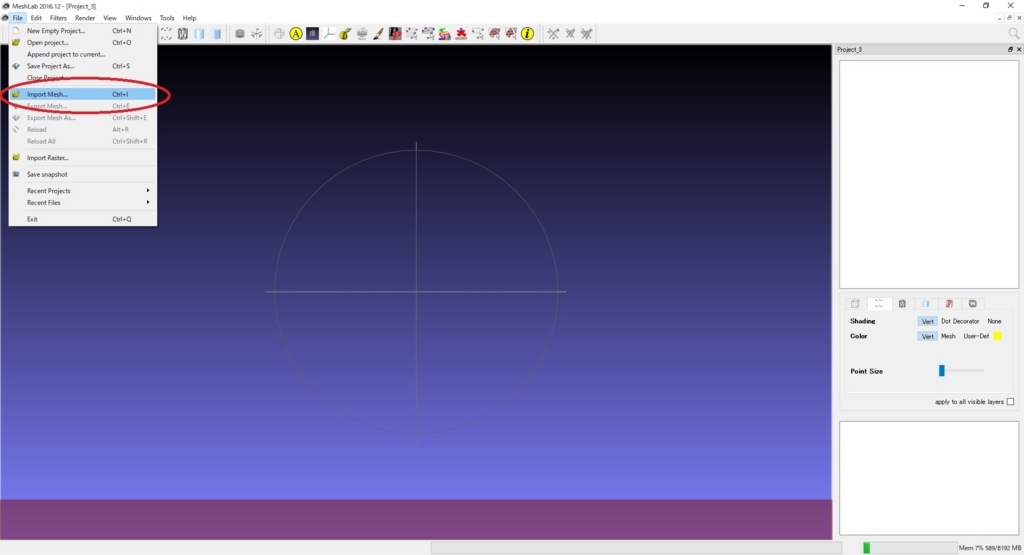

Holographic stereogram is a hotspot in the field of three-dimensional (3D) display. It can reconstruct the light field information of real and virtual scenes at the same time, further improving the comprehensibility of the scene and achieving the "augmentation" of the scene. In this paper, an augmented reality-holographic stereogram based on 3D reconstruction is proposed. First, the point cloud data is generated by VisualSFM software, and then the 3D mesh model is reconstructed by MeshLab software. The obtained scene model and the virtual scene are rendered simultaneously to obtain the fused real and virtual scene. Analysis of the experimental results shows that the proposed method can effectively realize an augmented reality-holographic stereogram.

Holographic stereogram (HS) is a research hotspot in the field of 3D display, providing a flexible and efficient means of 3D display. HS is widely used in the military, publicity, commerce, and other fields. Using discrete 2D images with parallax information as the input, the 3D reconstruction of a scene can be obtained after image processing, stereoscopic exposure, and development and fixing. An HS cannot show all the information of the scene; its view is limited to a certain angle (less than 180°). Moreover, an HS does not contain the depth information of the scene space, yet viewers can still perceive 3D cues because of the binocular parallax effect. HS discretizes and approximates the continuous 3D light field, which greatly reduces the amount of data.
Yunpeng Liu 1 †, Tao Jing 1 †, Qiang Qu 1, Ping Zhang 2, Pei Li 3, Qian Yang 4, Xiaoyu Jiang 1* and Xingpeng Yan 1*

1 Department of Information Communication, Army Academy of Armored Forces, Beijing, China.

2 Center of Vocational Education, Army Academy of Armored Forces, Beijing, China.

3 R and D Center for Intelligent Control and Advanced Manufacturing, Research Institute of Tsinghua University in Shen Zhen, Shen Zhen, China.

After sparse reconstruction of all the images, each image and its recognized features (detected edges) will be shown in a different color. This step is quicker than the dense reconstruction. Dense reconstruction (CMVS) is where the point cloud is formed. This process may take from several seconds to several hours, depending on the number of photos, their quality, and your system setup. The resulting file can be opened in MeshLab for further processing, and you may also use MeshLab to view the final model. For further details on VisualSFM, please refer to Changchang Wu's web site.

It took only 123 images to create the 3D car below. More images can be found here.
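To give a rough feel for why processing time depends so strongly on the number of photos, the sketch below counts how many image pairs would need to be compared if every photo were matched against every other one. Exhaustive pairwise matching is an assumption made for illustration, and the function name `match_pair_count` is hypothetical; the actual time also depends on image quality and hardware, which this sketch does not model.

```python
def match_pair_count(num_images: int) -> int:
    """Count unordered image pairs, assuming every image is matched
    against every other image (an illustrative assumption)."""
    if num_images < 0:
        raise ValueError("num_images must be non-negative")
    # n choose 2: each pair is counted once
    return num_images * (num_images - 1) // 2


if __name__ == "__main__":
    # 123 is the image count quoted for the car model
    for n in (50, 123, 500):
        print(f"{n} images -> {match_pair_count(n)} pairs")
```

Under this assumption the pair count grows quadratically, so doubling the number of photos roughly quadruples the matching work.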
